Direct™ product manager Ian Bolton explores the impact of driving digital inkjet presses with software that evolved from traditional print processes, as those presses advance to print faster, at higher resolution, and with a wider variety of colors and applications. In particular, Ian focuses on the impact that rising data rates have on the workflow:

Digital press software that evolved from traditional print processes has already reached its limit. Digital presses are moving to higher resolutions: most are going from 600 dpi to 1200 dpi, which doubles the resolution in both directions and so quadruples the data. They’re also getting deeper (more bits per pixel), with up to 7 drop sizes, and those drops are being made from a wider variety of colors. Presses are becoming wider too, up to 4 meters, and faster, up to 1,000 feet per minute!

And what if every item you print is different? Think of fully personalized products such as curtains, flooring, wall coverings and clothing. All of these require software that can deliver ultra-high data rates.

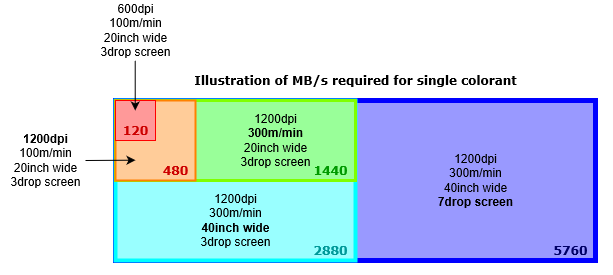

Let’s look at how those data rates scale up as digital presses advance:

If we start with 600 dpi, 20 inches wide, 3 drop sizes and 100 m per minute, that’s 120 MBps per colorant, which is not too challenging. But once we move up to 1200 dpi, we’ve quadrupled the data to 480 MBps, which is roughly the maximum read speed of all but the most bleeding-edge solid state drives available today.
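The figures above follow from a simple product: pixels per line × lines per second × bits per pixel. The Python sketch below reproduces them under a few assumptions of our own: the same resolution across and along the web, uncompressed data, and ceil(log2(drop sizes + 1)) bits per pixel (one code for "no drop" plus one per drop size). The function name `data_rate_mbps` is hypothetical and not part of any product.

```python
import math

INCHES_PER_METER = 1 / 0.0254  # 1 inch is exactly 25.4 mm


def data_rate_mbps(dpi: int, width_in: float, drop_sizes: int,
                   speed_m_per_min: float) -> float:
    """Uncompressed screened data rate for ONE colorant, in MB/s (10**6 bytes).

    Assumes square resolution (same dpi across and along the web) and
    ceil(log2(drop_sizes + 1)) bits per pixel: 'no drop' plus each drop size.
    """
    bits_per_pixel = math.ceil(math.log2(drop_sizes + 1))
    pixels_per_line = width_in * dpi
    # Web speed converted to inches per second, then to raster lines per second.
    lines_per_second = speed_m_per_min / 60 * INCHES_PER_METER * dpi
    bits_per_second = pixels_per_line * lines_per_second * bits_per_pixel
    return bits_per_second / 8 / 1e6


for dpi in (600, 1200):
    rate = data_rate_mbps(dpi=dpi, width_in=20, drop_sizes=3, speed_m_per_min=100)
    print(f"{dpi} dpi: {rate:.0f} MBps")
```

It prints about 118 MBps and 472 MBps, which the article rounds to 120 MBps and 480 MBps.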

Printhead, nozzle and roller technology keeps improving, so rated speeds keep rising. What happens when we go up to 300 m per minute? The rate is now 1.4 GBps, and you will need one of those bleeding-edge solid state drives to keep up, bearing in mind that the drive will now be writing as well as reading.

And if we go wider, printing our wall coverings at 40 inches, we’re now at 2.8 GBps … and we want our walls to look great close up, so we might use 7 drop sizes, which takes us up to 5.7 GBps … and this is all just for one colorant!
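Because the data rate is a product of independent factors, each upgrade multiplies it. This sketch chains the four steps above from the same 600 dpi baseline. One assumption is ours rather than the article's: 7 drop sizes plus "no drop" would fit in 3 bits, so the sketch assumes the data is padded to 4 bits per pixel (double the 2 bits for 3 drop sizes), because that doubling is what reproduces the 5.7 GBps figure.

```python
# Baseline from the article: 600 dpi, 20 in wide, 3 drop sizes (2 bits per
# pixel), 100 m/min. pixels per line * lines per second * bits / 8 -> MB/s.
baseline_mbps = (20 * 600) * (100 / 60 / 0.0254 * 600) * 2 / 8 / 1e6

# Each upgrade multiplies the per-colorant rate by an independent factor.
# ASSUMPTION: the 7-drop-size case is stored at 4 bits per pixel, i.e. 2x the
# 2 bits needed for 3 drop sizes.
steps = [
    ("1200 dpi (2x across and 2x along the web)", (1200 / 600) ** 2),
    ("300 m per minute", 300 / 100),
    ("40 inches wide", 40 / 20),
    ("7 drop sizes at 4 bits per pixel", 4 / 2),
]

rate_mbps = baseline_mbps
print(f"{'baseline':<42} {rate_mbps / 1000:.2f} GBps")
for label, factor in steps:
    rate_mbps *= factor
    print(f"{label:<42} x{factor:g}  {rate_mbps / 1000:.2f} GBps")

total_factor = rate_mbps / baseline_mbps
print(f"overall: x{total_factor:g}")
```

The combined factor is 48, taking roughly 118 MBps to roughly 5.67 GBps per colorant, which the article rounds to 5.7 GBps.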

Based on these numbers, it should now be clear that for this generation of digital presses and beyond, a disk-based workflow just isn’t going to cut it: disks can’t read and write this amount of data fast enough, and holding it would require ridiculous amounts of physical storage. This is where software evolved from traditional workflows hits a barrier: the data rate barrier.
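To put "ridiculous amounts of physical storage" into numbers, here is a back-of-the-envelope sketch at the article's 5.7 GBps per colorant. The four colorants, the one-hour run and the absence of compression are illustrative assumptions, not figures from the article.

```python
# Per-colorant data rate from the article, in bytes per second.
RATE_BYTES_PER_S = 5.7e9

# ASSUMPTIONS for illustration only: a four-colorant (CMYK) press running for
# one hour, with no compression and every item different (nothing reused).
COLORANTS = 4
SECONDS = 60 * 60

total_bytes = RATE_BYTES_PER_S * COLORANTS * SECONDS
print(f"{total_bytes / 1e12:.1f} TB per hour of printing")

# Sustained bandwidth the storage would need if rasters were written to disk
# and read back for the press at the same time.
bandwidth = RATE_BYTES_PER_S * COLORANTS * 2
print(f"{bandwidth / 1e9:.1f} GBps combined read + write")
```

Under those assumptions it comes to about 82 TB per hour, and the storage would have to sustain about 45.6 GBps of combined reads and writes.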

To solve this, we need to go back to the drawing board. It’s similar to the engineering challenge of moving from propeller-driven aircraft to jets that could break the sound barrier. First, you need to develop a new engine, and then you need to commercialize it.

So, if you’re looking for software to power your first or next digital press, it needs the right kind of software engine: one that isn’t based on disk technology, so that you can drive your digital press electronics directly and smash through the data rate barrier. In other words, you need to go Direct.

To learn more about the impact of rising data rates and how you can futureproof your next digital press, visit our website and read about going Direct.

If you’re interested in calculating data rates, take a look at this blog post, where you can download your own data rate calculator: Choosing the class of your raster image processor

Further reading:

- Harlequin Core – the heart of your digital press

- What is a raster image processor

- Ditch the disk: a new generation of RIPs to drive your digital press

- Is your printer software up to the job?

- Where is screening performed in the workflow

- What is halftone screening?

- Unlocking document potential

- Future-proofing your digital press to cope with rising data rates
