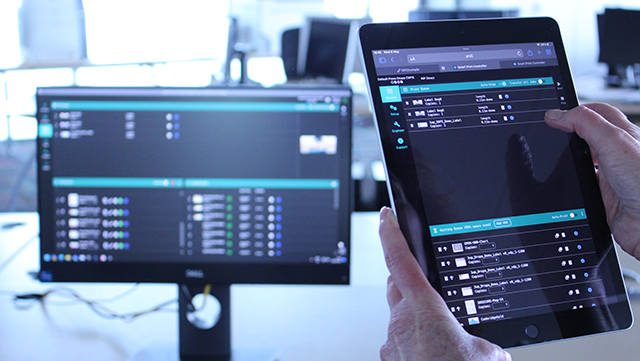

SmartDFE™ Partner Network member Vir Softech recently took the opportunity to demonstrate how easy and fast it is to develop a custom User Interface (UI) for the Smart Print Controller in SmartDFE, the Digital Front End for high-speed, single-pass inkjet presses. While SmartDFE includes a sample UI application that drives the Smart Print Controller, in today’s competitive landscape OEMs demand their own branded UI, reflecting their own style and color scheme, and with the ability to offer unique features.

One of the key features of SmartDFE is its integration with OPC UA, a communication protocol for industrial automation that easily connects your remote devices in the Cloud. OPC UA support means SmartDFE can be driven from a variety of programming languages, for example C, C++, C# (.NET), Java, Python, and Delphi, making it adaptable to different development environments.

The team at Vir Softech decided to build a customized application on a web-based technology stack that is compatible with multiple devices. Development took less than three weeks, showing that creating a custom UI for SmartDFE can be quick and can significantly reduce time to market.

If you’re interested in learning more, join us at drupa 2024 on the HYBRID Software stand 7a/D03, where the team from Vir Softech will be presenting this project. Check the HYBRID Software stand theater timetable for details, or contact us directly to ensure you don’t miss your personal demonstration.

Further reading:

- Blog post: Streamlining OPC UA integration with Traeger’s SDK

- Video: Inkjet printing in smart manufacturing

- Video: How to transform your inkjet business with Industry 4.0 and OPC UA

- Video: Short introduction to the OPC UA
